Why Personality Matters More Than Most Leadership Books Suggest

If you spend enough time reading leadership literature, attending leadership conferences or scrolling through educational social media, you soon encounter the very same message: effective leadership is primarily about behaviours. Develop the right habits, follow the right systems, adopt the right strategies and success will follow.

There is some truth in this, of course. However, after spending years visiting well over one hundred schools annually, both in the UK and internationally, I have become increasingly convinced that one factor receives far less attention than it deserves: personality. Temperament shapes leadership more profoundly than many of us would like to admit!

This is not because personality determines whether someone becomes an effective leader. If anything, the opposite seems true. Some of the most successful Heads of Department I have encountered could not be more different from one another! Some are warm, nurturing and relationship-driven. Others are analytical, highly structured and relentlessly focused on standards. Some are visionaries who seem permanently dissatisfied with the status quo (e.g. me). Others quietly build strong cultures through consistency, patience and attention to detail.

What interests me is that these differences are not random. As I move from school to school, talking not only to Heads of Department but also to the colleagues who work for them, I repeatedly notice the same pattern. The very qualities that make leaders successful often become the source of their greatest difficulties. The highly innovative leader can create instability. The highly organised leader can create bureaucracy. The highly supportive leader can avoid difficult conversations. The highly charismatic leader can stop listening…

The more schools I visit in my work as a consultant, the more convinced I become that leadership is as much about understanding ourselves as it is about understanding pedagogy, curriculum or management.

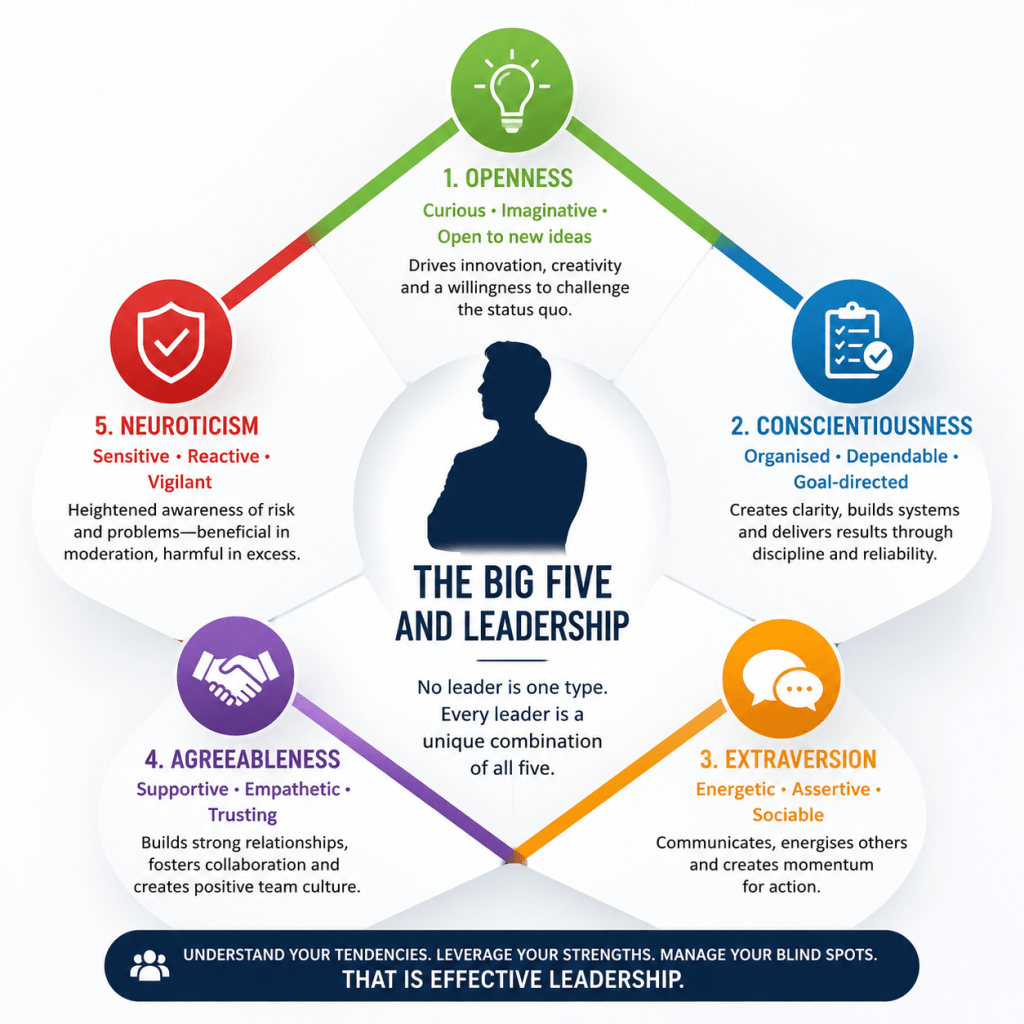

The Big Five Personality Model

The Big Five Personality Model is the most widely researched and scientifically supported framework for understanding human personality. Developed through the work of psychologists such as Lewis Goldberg, Paul Costa and Robert McCrae during the 1980s and 1990s, it emerged from decades of research analysing the personality traits people use to describe themselves and others. Rather than placing individuals into fixed categories, the model measures personality across five dimensions: Openness to Experience, Conscientiousness, Extraversion, Agreeableness and Neuroticism. Its popularity stems from its strong empirical foundation, cross-cultural validity and ability to predict important outcomes such as leadership effectiveness, job performance, wellbeing and interpersonal relationships.

Why the Big Five Has Stood the Test of Time

Whenever personality is discussed, people often think of colourful quizzes that place individuals into neat categories. Such frameworks can be entertaining, but most have relatively weak scientific foundations. The Big Five is different. Why?

Emerging from decades of research conducted across multiple countries and cultures, it is widely regarded as the most robust and empirically validated model of personality currently available (Costa & McCrae, 1992; John, Naumann & Soto, 2008). One reason it remains so influential is that it consistently predicts meaningful outcomes, including workplace performance, leadership effectiveness, job satisfaction, wellbeing and interpersonal relationships (Barrick & Mount, 1991).

What makes the framework particularly appealing is that it does not place people into boxes. You are not an “Openness person” or a “Conscientiousness person”. Instead, each of us possesses all five traits to varying degrees. Think of them as five sliders rather than five categories. Every leader displays a unique combination of these dimensions, and it is that combination that influences how they communicate, make decisions, respond to pressure, manage conflict and inspire others. For instance, as a middle manager, I was high in Openness to Experience and Extraversion, moderate in Agreeableness and Conscientiousness and low in Neuroticism (although some of my colleagues may disagree on this one…).

As you read the descriptions below, you will almost certainly recognise aspects of yourself in several of them! In fact, if you only recognise yourself in one, you are probably oversimplifying your own personality or not being completely honest with yourself…

Openness to Experience: The Innovator

Openness reflects intellectual curiosity, creativity, imagination and a willingness to explore new possibilities. People who score highly on this dimension are naturally drawn to ideas, innovation and experimentation.

In leadership, this often manifests itself as a tendency to challenge assumptions and ask uncomfortable questions. Why are we doing it this way? Is there a better alternative? Are we confusing tradition with effectiveness?

Research consistently links Openness with creativity, innovation and strategic thinking (Judge et al., 2002), which is hardly surprising. Organisations improve because somebody somewhere decides that existing practice is not good enough. Most talented language learners score highly in this trait.

Looking back on my own career, I can see this trait at work repeatedly. When I first began questioning many of the dominant assumptions surrounding communicative language teaching and started advocating approaches that eventually evolved into EPI, I was frequently told that I was overcomplicating matters, challenging settled wisdom or trying to fix something that was not broken. Had I been less open to alternative possibilities, I would probably have accepted those criticisms and moved on.

However, Openness comes with risks: one of the most common leadership mistakes I observe amongst highly innovative leaders is that they become addicted to novelty. They move from initiative to initiative, conference to conference, idea to idea, often leaving colleagues exhausted in their wake. Innovation is exciting. Implementation is not. Yet successful departments are built far more often through consistent implementation than through constant innovation… a statement that may not win me many friends amongst educational influencers!

For MFL leaders, Openness can be enormously beneficial because our profession is constantly evolving. New specifications, new technologies, new research findings and now AI are transforming the landscape. Leaders who remain intellectually curious are often better equipped to navigate these changes. The challenge lies in ensuring that curiosity serves improvement rather than distraction.

Conscientiousness: The Architect

If Openness generates ideas, it is Conscientiousness that turns them into reality! This dimension reflects organisation, self-discipline, reliability and persistence. In the literature, this is the personality trait most consistently associated with professional success across occupations (Roberts et al., 2009).

Highly conscientious leaders create clarity. Expectations are explicit, systems function properly and deadlines are met. Staff know what is expected of them and can generally trust that commitments will be honoured.

Whenever I think about Conscientiousness, I am reminded of the years spent developing Sentence Builders, refining resources and building The Language Gym. None of those projects emerged from flashes of inspiration alone. They required thousands of hours of repetitive, often unglamorous work. The reality is that ideas are cheap. Execution is expensive and requires many hours of work and the ability to delegate effectively – something not many individuals are keen to do.

Conscientiousness also has a darker side, though. Many conscientious leaders become victims of their own strengths. Because they value organisation and precision, they can gradually drift towards perfectionism, excessive control and bureaucratic thinking. They begin to assume that every problem can be solved through another procedure, another spreadsheet or another monitoring process.

I have occasionally encountered departments where systems, from marking and assessment to predicted-grades monitoring and retrieval-practice schedules, became so elaborate that they seemed to consume the very energy they were supposed to save.

For MFL leaders, Conscientiousness is invaluable when designing curricula, sequencing content, ensuring progression and maintaining consistency across classes. However, language learning remains a profoundly human endeavour. Not everything that matters can be measured and not everything that can be measured matters.

Extraversion: The Influencer

Extraversion refers to sociability, assertiveness and the tendency to gain energy from interaction with others. Research consistently associates Extraversion with leadership emergence (Judge et al., 2002). In simple terms, extraverts are more likely to become leaders because they are often more willing to speak up, take charge and influence others. That’s me through and through.

Much of my professional life now involves standing in front of audiences ranging from twenty teachers to several hundred. I enjoy those interactions enormously. I enjoy debating ideas, provoking discussion and engaging with colleagues. Yet one lesson I have learned over the years is that visibility should never be confused with wisdom.

Some of the most insightful teachers I have encountered barely speak during workshops. They listen, reflect and contribute only when they have something genuinely valuable to add. Highly extraverted leaders can sometimes fall into the trap of believing that because they communicate effectively, they are also listening effectively. Unfortunately, the two are not always correlated.

For MFL departments, Extraversion can be particularly useful because language subjects often require enthusiastic advocates. Whether promoting uptake, defending curriculum time or building departmental identity, leaders frequently need to persuade others of the value of languages. The challenge is remembering that some of the department’s most important voices may not be the loudest.

Agreeableness: The Connector

Agreeableness reflects empathy, kindness, cooperation and concern for others. Highly agreeable leaders tend to create environments characterised by trust, psychological safety and collegiality. Their staff often feel valued, supported and respected.

Given the mounting pressures facing education, it is difficult to overstate the importance of these qualities. One aspect of my career that has surprised me over the years is the sheer number of teachers who contact me seeking advice, reassurance or support. Many of these individuals are complete strangers. Whenever possible, I respond. Not because I feel obliged to, but because I remember how isolating teaching can sometimes feel, particularly early in one’s career.

However, leadership is not friendship, and this is where Agreeableness can become problematic. The leaders I have seen struggle most with difficult conversations are often highly agreeable individuals who genuinely care about their colleagues. They worry about upsetting people. They delay challenging conversations. They hope problems will resolve themselves.

Unfortunately, they rarely do. For MFL departments, Agreeableness can help create cultures in which teachers willingly share resources, collaborate and support one another. Such environments are often highly enjoyable places to work. Yet harmony and effectiveness are not synonymous. A department can be warm, supportive and collegial whilst simultaneously tolerating weak practice.

The most effective leaders somehow manage to combine kindness with accountability. This is what I love about Tom Ball, my last Head of Department, the only one I have ever worked under who managed to combine both consistently.

Neuroticism: The Sentinel

Of all the Big Five dimensions, Neuroticism is probably the most misunderstood. The term sounds negative, yet research suggests that moderate levels of Neuroticism can actually confer important advantages (Nettle, 2006). Individuals who score moderately highly on this dimension often notice problems earlier, prepare more thoroughly and think more carefully about risks. In other words, they worry for a reason.

Throughout my career I have occasionally found myself at the centre of heated professional debates. Whether discussing methodology, curriculum design or aspects of language acquisition research, criticism has never been in short supply. What has always struck me is how differently people respond to criticism. Some become consumed by it. Others move on remarkably quickly.

Leaders who score very highly on Neuroticism often struggle because they ruminate. They replay conversations. They imagine worst-case scenarios. They carry professional worries home and struggle to switch off.

At moderate levels, however, Neuroticism can be useful. It can prevent complacency. It can encourage preparation. It can promote vigilance. Hence, for MFL leaders, a moderate degree of concern about uptake, examination outcomes or curriculum quality is probably healthy. Complete indifference would hardly be desirable.

The key, as always, is balance, isn’t it?

The Leadership Lesson Nobody Talks About

After visiting hundreds of schools and observing countless leaders, I have become increasingly convinced that leadership failure rarely stems from a lack of strengths. More often than not, it stems from strengths taken too far. The visionary can become chaotic, the organiser can become bureaucratic, the communicator can become overpowering, the nurturer can become too permissive and, finally, the vigilant leader can become too anxious and burn out.

In other words, our greatest strengths often contain within them the seeds of our greatest leadership challenges. This is why self-awareness matters so much.

Mind you: the goal is not to become somebody else. The goal is to understand your own tendencies sufficiently well that you can compensate for them. The innovative leader needs discipline. The conscientious leader needs flexibility. The extravert needs to listen. The agreeable leader needs courage. The vigilant leader needs perspective.

Perhaps that is the most valuable lesson the Big Five has to offer. Not a set of labels or a collection of boxes to fit yourself or others into, but rather a useful framework for understanding why we lead the way we do and how we might become slightly better versions of ourselves.

After all, leadership is rarely about perfection. As I always say in my workshops, it is usually about recognising our predictable mistakes before they become costly ones.
